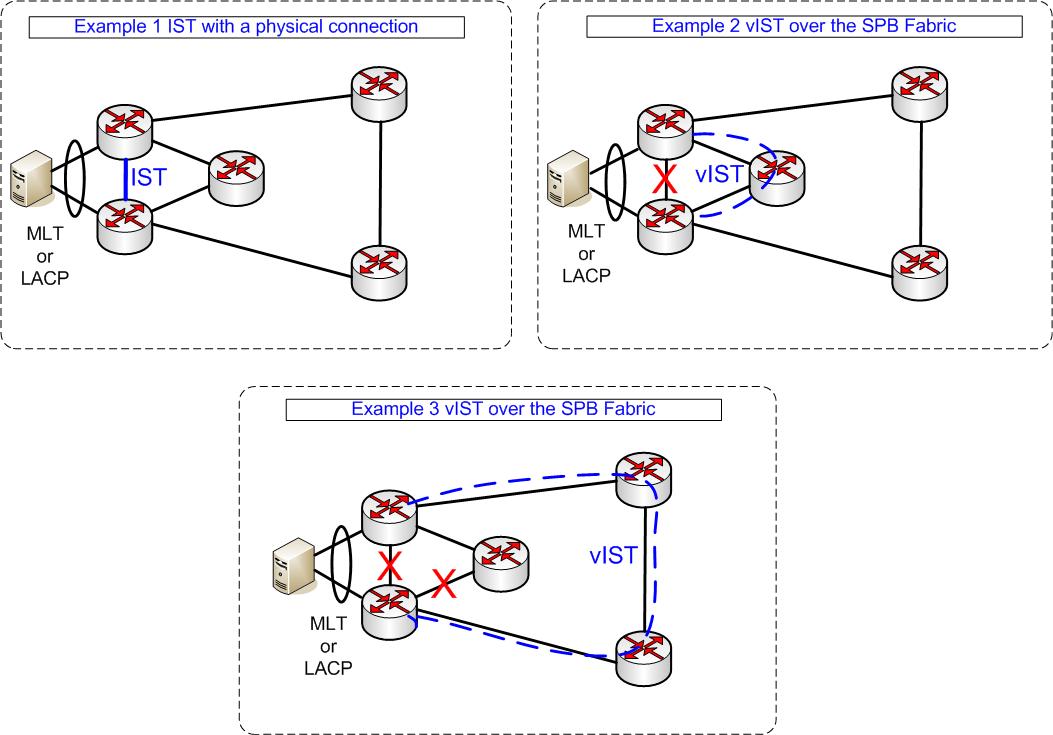

A decade ago Nortel introduced SMLT, the first multichassis link aggregation technology. Today most major vendors have a multichassis link aggregation technology in their portfolio, such as VSS / vPC from Cisco or IRF from HP. For me it was the end of spanning tree in the network core. Multichassis link aggregation provides two main benefits: active-active load balancing and fast failover times. In the beginning the technology was mostly used to connect switches with each other. It is also a very elegant way to connect servers to the network: you can provide additional bandwidth to the servers and avoid a single point of failure in the network. In a datacenter where every server has two connections to two different switches with a multichassis link aggregation, one device can fail or receive a software update and everything keeps running.

Besides all the good points, it comes with some limitations. Depending on the vendor and technology, a multichassis link aggregation can only span 2 or 4 devices. In most cases a lot of manual configuration is needed to set up the links. And there was the limitation of a physical link between the two switches that share the endpoint connection of your multichassis link aggregation. The worst case was a failure of the direct connection between the two switch cluster members: you ended up in a split-brain situation, which results in a complete network outage.

Avaya has now introduced the virtual IST for their VSP8000 switches, which eliminates the need for a physical connection between the two switches that form a multichassis link aggregation, or SMLT in Avaya terms. In an SPB fabric based network, the two switches only need to be connected to the fabric via SPB. That provides extra flexibility for your deployments as well as better redundancy.

Assuming you already have an SPB-based configuration, here are the steps needed to configure a virtual Inter Switch Trunk (vIST).

First we need a vIST VLAN and an I-SID with an L3 interface:

    vlan create 4000 name "V-IST" type port-mstprstp 1
    vlan i-sid 4000 40004000
    interface Vlan 4000
    ip address 10.1.1.2 255.255.255.248 0

Now we configure the peer IP, which is the IP of the partner device with which you want to form a virtual IST connection:

    virtual-ist peer-ip 10.1.1.3 vlan 4000
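
In this example the local interface (10.1.1.2) and the peer IP (10.1.1.3) sit in the same /29 on the vIST VLAN. A quick sanity check for that can be sketched in Python with the standard `ipaddress` module. The function name `same_vist_subnet` is made up for illustration, and the check only mirrors the addressing used in this post; it is not an Avaya tool.

```python
import ipaddress


def same_vist_subnet(local_ip: str, netmask: str, peer_ip: str) -> bool:
    """Return True if peer_ip is in the subnet of local_ip/netmask and is not local_ip itself."""
    local = ipaddress.ip_interface(f"{local_ip}/{netmask}")
    peer = ipaddress.ip_address(peer_ip)
    return peer in local.network and peer != local.ip


# Addresses taken from the example configuration above.
print(same_vist_subnet("10.1.1.2", "255.255.255.248", "10.1.1.3"))
# A peer outside 10.1.1.0/29 fails the check.
print(same_vist_subnet("10.1.1.2", "255.255.255.248", "10.1.1.9"))
```

The first call prints `True`, the second `False`.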

In the SPB config you also need a virtual BMAC and the SMLT peer system ID of the partner device. Note that this extra virtual BMAC consumes one additional ID from your IS-IS device maximum. With software 4.0.0.0 on the VSP8k the limit was 500 backbone MACs.

    spbm 1 smlt-virtual-bmac 00:00:00:00:10:ff
    spbm 1 smlt-peer-system-id 0010.0001.0003
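
The same steps have to be repeated on the partner switch with the peer-specific values swapped. The following Python sketch renders the command lines from this post for both members so the mirrored parts are easy to see. It makes two assumptions that are not stated above: the system ID `0010.0001.0002` for the first switch is hypothetical, and the virtual BMAC is taken to be identical on both members. The helper names (`VistMember`, `render_vist_config`) are made up, and the output contains only the commands shown in this post, without any CLI mode changes.

```python
from dataclasses import dataclass


@dataclass
class VistMember:
    ist_ip: str     # L3 address of this switch on the vIST VLAN
    system_id: str  # IS-IS system ID of this switch


def render_vist_config(local, peer, vlan=4000, isid=40004000,
                       mask="255.255.255.248",
                       virtual_bmac="00:00:00:00:10:ff"):
    """Return the vIST command lines for `local`, pointing at `peer`."""
    return [
        f'vlan create {vlan} name "V-IST" type port-mstprstp 1',
        f"vlan i-sid {vlan} {isid}",
        f"interface Vlan {vlan}",
        f"ip address {local.ist_ip} {mask} 0",
        f"virtual-ist peer-ip {peer.ist_ip} vlan {vlan}",
        # Assumption: both members use the same virtual BMAC.
        f"spbm 1 smlt-virtual-bmac {virtual_bmac}",
        f"spbm 1 smlt-peer-system-id {peer.system_id}",
    ]


# Switch A uses a hypothetical system ID; switch B's values come from the post.
switch_a = VistMember(ist_ip="10.1.1.2", system_id="0010.0001.0002")
switch_b = VistMember(ist_ip="10.1.1.3", system_id="0010.0001.0003")

for name, local, peer in (("A", switch_a, switch_b), ("B", switch_b, switch_a)):
    print(f"--- switch {name} ---")
    print("\n".join(render_vist_config(local, peer)))
```

Only three lines differ between the two outputs: the local IP address, the peer IP and the peer system ID.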

When you have configured both members of your switch cluster and they have a connection to each other, your vIST should be up and running:

    show virtual-ist

    ================================================================================
                                       IST Info
    ================================================================================
    PEER-IP          VLAN     ENABLE     IST
    ADDRESS          ID       IST        STATUS
    --------------------------------------------------------------------------------
    10.1.1.3         4000     true       up

    NEGOTIATED                           MASTER/
    DIALECT          IST STATE           SLAVE
    --------------------------------------------------------------------------------
    v4.0             Up                  Slave

To use a multichassis link aggregation / SMLT across your vIST you need to configure an MLT with type SMLT. Avaya now has 512 IDs available for SMLTs, so you always configure an MLT and give it the SMLT attribute. The old SLT (Single Port Split MultiLink Trunk) is no longer needed. The configuration looks like this:

    interface mlt 2
    smlt

The only important thing here is the MLT ID, which has to be the same on both peer nodes of your switch cluster.
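
If you keep a list of the SMLT-enabled MLT IDs per switch, the matching rule is easy to verify offline. This is a minimal Python sketch; the function name `unmatched_smlt_ids` and the sample ID lists are made up for illustration, and gathering the IDs from the switches is left out.

```python
def unmatched_smlt_ids(local_ids, peer_ids):
    """Return the MLT IDs that are configured as SMLT on only one of the two peers."""
    return sorted(set(local_ids) ^ set(peer_ids))


# Hypothetical example: MLT 2 matches, MLT 5 and MLT 6 each exist on one side only.
print(unmatched_smlt_ids([2, 5], [2, 6]))
```

An empty list means every SMLT has a counterpart with the same MLT ID on the peer.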

At the moment the virtual Inter Switch Trunk is only supported on the VSP 8k, but I expect we will see it on other Avaya VSP switches in the near future.

Hi

Very good examples above, thanks for that. I have a question regarding the config line:

spbm 1 smlt-virtual-bmac 00:00:00:00:10:ff

I have a running SPBM network with VSP4k on VOSS 5.1.1.1 (latest VOSS) and I want to build an SMLT vIST cluster. When the fabric was configured, the engineer didn't set the BMAC manually on the nodes. If I now configure SMLT, do I need to run this virtual BMAC command, or will the two nodes do that by themselves?

Best Regards

Thomas

Hi Thomas,

I would always prefer to configure the virtual BMAC.

It is possible to use a simplified configuration.

If you plan to have multiple vISTs in the same SPB network, it is better to have customized virtual BMACs that you know and control.

Cheers